Cursor Automations turns AI agents into background processes

Written by Joseph Nordqvist, March 6, 2026 at 1:15 PM UTC

Agentic coding tools have gotten genuinely fast. The problem is that everything around them hasn't. Code review, security audits, test coverage, and incident response still move at roughly the speed of a human remembering to start them. And the more code agents produce, the bigger that gap gets.

Cursor's answer, launched Thursday, is to stop waiting for humans to remember. The company introduced Automations: a system that lets AI agents run continuously in the background, triggered by real events in a development pipeline (a PR opening, a PagerDuty alert, a Slack message, a timer) without anyone typing a prompt first.

The maintenance tax

It's worth being precise about what Cursor is actually selling here, because it's not what the agentic coding headlines usually say.

This isn't about writing more code faster. It's about handling what Cursor calls "the other parts of the development lifecycle": the review, monitoring, and maintenance work that has piled up while code generation sprinted ahead.

It’s a different problem, and arguably a more interesting one to solve. Code generation is becoming commoditized fast. The maintenance layer on top of it isn't.

Cursor engineer Jon Kaplan put it plainly in the video announcement: "As agents have gotten really capable at handling work autonomously, we found ourselves kicking them off over and over again for the same type of task. So we thought, why not automate that?"

The company says it has been running Automations internally for several weeks and now runs hundreds per hour across its own codebase. The examples it has shared cover the things engineering teams perpetually deprioritize:

Security auditing runs on every push to main, specifically so the agent can take more time finding nuanced issues without blocking the review process.

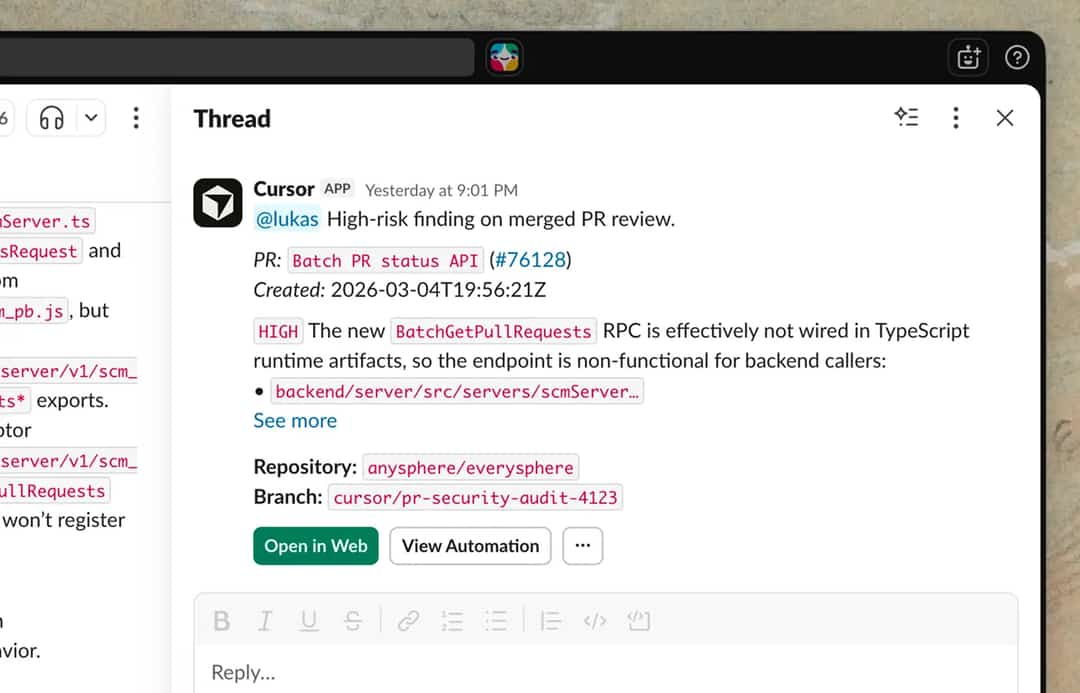

PR risk triage runs on every PR open or push, classifying risk based on blast radius, complexity, and infrastructure impact. Low-risk PRs get auto-approved. Higher-risk ones get up to two reviewers assigned based on contribution history. Every decision is summarized in Slack and logged to a Notion database via MCP so the team can audit the agent's work and tweak the instructions.

Incident response triggers when PagerDuty fires. An agent uses the Datadog MCP to dig into logs, checks the codebase for recent changes, and sends on-call engineers a Slack message with the corresponding monitor message and a PR containing the proposed fix.

Test coverage runs every morning: an agent reviews recently merged code, identifies areas that need test coverage, writes tests following existing conventions, runs the relevant test targets, and opens a PR. It only alters production behavior when necessary.

Bug triage watches a Slack channel for incoming bug reports, checks for duplicates, creates an issue via Linear MCP, investigates the root cause in the codebase, attempts a fix, and replies in the original thread with a summary.

One of the more surprising use cases the Cursor engineers mentioned: Cursor pipes community feature requests from X into a Slack channel, then triggers an agent on each one. "Some of these features, if they're simple, are actually just getting implemented asynchronously," said Jack Pertschuk of Cursor.
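To make the PR risk triage flow described above concrete, here is a minimal sketch of its decision logic. It is not Cursor's implementation: in the product an agent makes the classification, whereas this stand-in uses fixed rules. The `PullRequest` fields, the thresholds, and the `history` mapping of contributor to prior commit count are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    files_changed: int    # assumed proxy for blast radius
    lines_changed: int    # assumed proxy for complexity
    touches_infra: bool   # assumed flag for infrastructure impact


def risk_level(pr: PullRequest) -> str:
    """Classify a PR as 'low' or 'high' using made-up thresholds."""
    score = 0
    if pr.files_changed > 10:
        score += 1
    if pr.lines_changed > 300:
        score += 1
    if pr.touches_infra:
        score += 2
    return "low" if score == 0 else "high"


def pick_reviewers(history: dict[str, int], limit: int = 2) -> list[str]:
    """Return up to `limit` contributors, most prior commits first."""
    ranked = sorted(history.items(), key=lambda item: (-item[1], item[0]))
    return [name for name, _ in ranked[:limit]]


def triage(pr: PullRequest, history: dict[str, int]) -> dict:
    """Auto-approve low-risk PRs; otherwise assign at most two reviewers."""
    level = risk_level(pr)
    if level == "low":
        return {"risk": level, "action": "auto-approve", "reviewers": []}
    return {
        "risk": level,
        "action": "request-review",
        "reviewers": pick_reviewers(history),
    }
```

Returning the decision as a plain dictionary mirrors the audit step in the description: the same record could be summarized in a chat message and appended to a log so the team can review what was decided and why.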

What it looks like in practice

The announcement includes an external case study from Rippling. Abhishek Singh, an engineer there, built a personal dashboard: he dumps meeting notes, action items, Loom links, and TODOs into a Slack channel throughout the day. A scheduled agent runs every two hours, reads all of it alongside his GitHub PRs, Jira issues, and Slack mentions, deduplicates across sources, and posts a clean dashboard. Rippling has since extended the pattern to incident triage, weekly status reports, and on-call handoffs. Senior staff engineer Tim Fall summarized the appeal: "Automations have made the repetitive aspects of my work easy to offload."

That's a real workflow, and it illustrates something the feature list alone doesn't: the same trigger-and-run framework handles everything from serious incident response to "please compile my notes."
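The trigger-and-run idea can be sketched in a few lines. This is a hypothetical model, not Cursor's API: the `Automation` and `Dispatcher` classes, the event names such as `pagerduty.alert`, and the `fake_agent` function standing in for an actual agent launch are all invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Automation:
    name: str
    trigger: str        # hypothetical event name, e.g. "pagerduty.alert"
    instructions: str   # standing prompt the agent would receive


@dataclass
class Dispatcher:
    run_agent: Callable[[str, dict], str]   # stand-in for launching an agent
    automations: list[Automation] = field(default_factory=list)

    def register(self, automation: Automation) -> None:
        self.automations.append(automation)

    def handle(self, event_type: str, payload: dict) -> list[str]:
        """Run every automation whose trigger matches the incoming event."""
        return [
            self.run_agent(a.instructions, payload)
            for a in self.automations
            if a.trigger == event_type
        ]


def fake_agent(instructions: str, payload: dict) -> str:
    """Placeholder agent: echoes what it was asked to do and on what."""
    return f"{instructions} <- {payload['summary']}"


if __name__ == "__main__":
    dispatcher = Dispatcher(run_agent=fake_agent)
    dispatcher.register(
        Automation("incident", "pagerduty.alert", "Investigate the alert")
    )
    dispatcher.register(
        Automation("dashboard", "schedule.2h", "Compile my notes")
    )
    print(dispatcher.handle("pagerduty.alert", {"summary": "p99 latency high"}))
```

The point of the sketch is that an incident responder and a note compiler differ only in their trigger and instructions; the dispatch path is identical.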

One thing the docs say that the announcement doesn't

The Automations documentation includes a caveat that didn't make it into the blog post: the memory tool — the feature that lets agents learn from past runs — "should be used with caution if your automation handles untrusted input. Inputs may lead to misleading or malicious memories that unintentionally impact future automation runs."

That's a real consideration for any team routing external bug reports or Slack messages from outside their organization into automations. The agents learn from what they see. If what they see is adversarial, they learn from that too. It's not a dealbreaker, but it's worth knowing before connecting a public-facing Slack channel to an automation with memory enabled.
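One way a team might act on that caveat is to let an automation write to memory only when its input comes from a source the team controls. The sketch below assumes a toy `MemoryStore` and made-up source labels; it does not reflect how Cursor's memory tool is configured or exposed.

```python
from dataclasses import dataclass, field

# Hypothetical labels for inputs the team controls end to end.
TRUSTED_SOURCES = {"internal-slack", "github"}


@dataclass
class MemoryStore:
    notes: list[str] = field(default_factory=list)

    def remember(self, note: str, source: str) -> bool:
        """Persist a note only if it came from a trusted source."""
        if source not in TRUSTED_SOURCES:
            return False   # untrusted input never reaches future runs
        self.notes.append(note)
        return True
```

Under this scheme, a run triggered from a public-facing channel could still read existing memories and do its work, but nothing it saw would carry over to the next run.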

Where this fits for Cursor right now

Cursor's annual revenue reportedly passed $2 billion earlier this week, doubling over the prior three months, according to Bloomberg. The company raised $2.3 billion at a $29.3 billion valuation last November. By most measures it's the dominant player in AI coding tools.

But the competitive terrain has shifted. Anthropic's Claude Code and OpenAI's Codex are both pushing hard on agentic coding, and both come backed by organizations whose core competency is building the underlying models. Cursor builds on top of those models. That's a structural difference that matters when the model labs decide to compete directly on the same ground.

Automations is a reasonable response to that pressure: it's not a bet on having better models, it's a bet on having better workflows. The question is whether workflow tooling compounds into a durable advantage, or whether it gets absorbed into every platform eventually.

Software development looks very different than it did a year ago. In the video, Kaplan summed up the shift: "With more software, with more output, you then have more stuff you need to review, more issues you need to triage, more things to manage around software. But a lot of these things are automatable."

Written by

Joseph Nordqvist

Joseph founded AI News Home in 2026. He studied marketing and later completed a postgraduate program in AI and machine learning (business applications) at UT Austin’s McCombs School of Business. He is now pursuing an MSc in Computer Science at the University of York.

This article was written by the AI News Home editorial team with the assistance of AI-powered research and drafting tools. All analysis, conclusions, and editorial decisions were made by human editors.

References

1. Build agents that run automatically. Jack Pertschuk, Jon Kaplan & Josh Ma, Cursor, March 5, 2026. (Primary source)
