Gemma 4 on an iPhone: here's what a 2B model can actually do

Written by Joseph Nordqvist/

5 min read

Google released Gemma 4 on April 2; four open models under Apache 2.0. The smallest, E2B, is 2.54 GB and it can run entirely on your phone. No internet, no API key, no account.

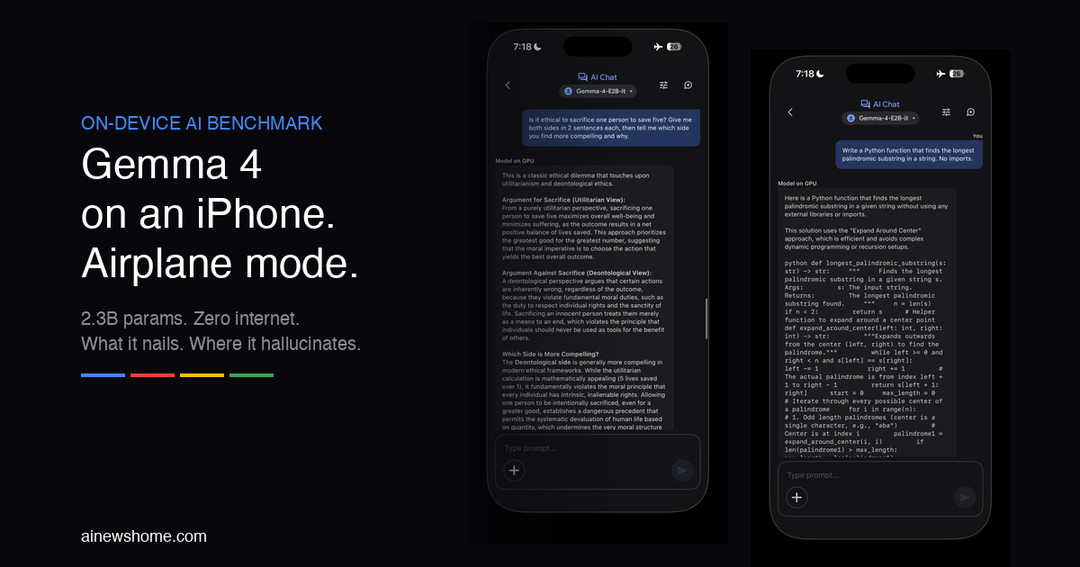

I downloaded it through the Google AI Edge Gallery app, turned on airplane mode, and started throwing prompts at it.

What it gets right

I ran seven tests in airplane mode. The reasoning tasks were surprisingly strong.

A Bayesian probability problem: three boxes, colored balls, what's the probability you picked Box A given you drew red? It produced a complete, correct proof. Prior probabilities, law of total probability, Bayes' theorem, right answer (2/3). With LaTeX rendering. On a phone. 18.9 seconds.

A coding prompt: write a longest palindromic substring function, no imports. Clean O(n²) expand-around-center algorithm, typed annotations, edge cases handled, test examples included. 30.1 seconds for the whole thing.

An ethics question: is it ethical to sacrifice one to save five, pick a side. It laid out utilitarian vs. deontological arguments, chose deontological, and defended the choice. No hedging, no "as an AI I can't." 8.9 seconds.

I also sent a photo of my cat through the vision feature. It nailed the description (pose, setting, lighting, mood) but called my Bengal cat a "tabby." 9.9 seconds.

| Test | Category | Prompt | Time | Verdict |

|---|---|---|---|---|

| Factual Recall | Knowledge | Explain quantum entanglement in 3 sentences. | 2.7s | Accurate and concise. |

| Bayesian Reasoning | Math | 3 boxes with colored balls — what's the probability you picked Box A given you drew red? | 18.9s | Correct answer (2/3). Full step-by-step Bayesian proof. |

| Code Generation | Coding | Write a Python function that finds the longest palindromic substring. No imports. | 30.1s | Correct O(n^2) expand-around-center algorithm with annotations and examples. |

| Ethical Reasoning | Reasoning | Is it ethical to sacrifice one person to save five? Pick a side. | 8.9s | Picked deontological, defended it with reasoning. No hedging. |

| Image Recognition | Vision | Photo of a Bengal cat — describe this animal. | 9.9s | Excellent visual description but called the Bengal a 'tabby'. Strong reasoning, weak breed ID. |

| History (Factual) | Knowledge | Write about the fall of the Western Roman Empire with emperors, dates, events. | 1m 24s | 7 factual errors. Invented an emperor, reversed Vandal migration, wrong dates. Good structure, bad facts. |

| Raw Speed | Performance | Count from 1 to 20, one number per line. | 1.9s | ~10 tok/s on an iPhone 16 Pro in airplane mode. |

What it gets wrong

I asked it to write about the fall of the Western Roman Empire with specific emperors, dates, and events.

The structure was great; four chronological phases, clear cause-and-effect, proper essay format. But it invented an emperor ("Marcus Didius Julius Caesar"), put Caracalla in the wrong century, reversed the direction of the Vandal migration, called Odoacer a "kingmaker" instead of King of Italy, and left out Theodosius I, Alaric's sack of Rome, and Attila the Hun entirely. Seven factual errors in one answer.

The pattern across all the tests is consistent: strong reasoning, weak recall. It can think through a Bayesian proof but can't remember which emperor ruled when. It can describe a cat in detail but can't identify the breed. The model learned how to reason, not what to know.

This tracks with Google's own numbers. E2B scores 60% on MMLU Pro (a knowledge benchmark) — a 25-point gap below the 31B model. Google's model card says directly: these models "are not knowledge bases."

It also explains why the app has an Agent Skills feature. When you're online, it can query Wikipedia and call external APIs to fill in the gaps. Offline, you get the reasoning engine without the encyclopedia.

The specs

Model | Active / Total Params | Context | MMLU Pro | Modalities |

|---|---|---|---|---|

E2B | 2.3B / 5.1B | 128K | 60.0% | Text, Image, Audio |

E4B | 4.5B / 8B | 128K | 69.4% | Text, Image, Audio |

26B A4B (MoE) | 3.8B / 25.2B | 256K | 82.6% | Text, Image |

31B (Dense) | 30.7B | 256K | 85.2% | Text, Image |

The "E" in E2B stands for "effective" — the active parameter count during inference. The total is higher (5.1B) because of Per-Layer Embeddings, which give each decoder layer its own small embedding lookup table. Combined with 2-bit and 4-bit quantization, this lets the model run in under 1.5 GB of memory on supported devices. The whole stack runs on LiteRT-LM, Google's open-source inference framework.

The bottom line

Gemma 4 E2B is not replacing cloud AI. It hallucinates facts, it can't access current information, and Google says as much in the model card.

But a 2.54 GB file on your phone just solved a Bayesian probability problem, wrote a correct algorithm, argued ethics, and described a photo.. all in airplane mode, all on-device, all with zero data leaving the device. For code help, drafting, math, and structured thinking, it works.

Transparency

All tests ran on an iPhone in airplane mode using Google AI Edge Gallery with Gemma-4-E2B-it (2.54 GB). Response times are as reported by the app. Screenshots are unmodified. The Roman Empire answer was fact-checked against primary historical sources. Claude Opus 4.6 assisted with interactive component development.

Editorial Transparency

This article was produced with the assistance of AI tools as part of our editorial workflow. All analysis, conclusions, and editorial decisions were made by human editors. Read our Editorial Guidelines

References

- 1.

Gemma 4 model card, Google AI for Developers, April 2, 2026

Parameter counts, benchmarks, modalities, and known limitations.

Primary - 2.

Gemma 4: Byte for byte, the most capable open models, Google Blog, April 2, 2026

Official announcement of Gemma 4 under Apache 2.0.

Primary - 3.

Bring state-of-the-art agentic skills to the edge with Gemma 4, Google Developers Blog, April 2, 2026

LiteRT-LM, on-device performance numbers, Agent Skills.

Primary - 4.

Welcome Gemma 4: Frontier multimodal intelligence on device, HuggingFace Blog, April 2, 2026

LMArena Elo scores, performance-vs-size chart.

- 5.

google/gemma-4-E2B-it, HuggingFace, April 2, 2026

Model card with specs, usage examples, and benchmarks.

- 6.

- 7.

Was this useful?

More in Models

View all- Gemma 4 E4B benchmark: 53 tests on a MacBook Pro M4 (24GB)5d ago

- Karpathy says LLM memory features "trying too hard"March 26, 2026

- Moonshot AI proposes new method for how LLM layers share information, claims 1.25x compute advantageMarch 16, 2026

- How ChatGPT and AlphaFold helped an Australian engineer design a personalized cancer vaccine for his dogMarch 16, 2026

Related stories

Google releases Gemini 3.1 Flash-Lite

Google has released a preview of Gemini 3.1 Flash-Lite, positioning it as the fastest and most cost-efficient model in the Gemini 3 series.

March 3, 2026

ModelsAlibaba launches Qwen 3.5 small model series with sub-1B edge options

Alibaba's Qwen team has released four new small language models — Qwen3.5-0.8B, 2B, 4B, and 9B — alongside their base model counterparts, extending the Qwen3.5 architecture to compact, resource-efficient deployments.

March 2, 2026

IndustryClaude hits #1 on the US App Store after Pentagon dispute

After the Pentagon banned Anthropic for refusing to allow Claude to be used for mass surveillance and autonomous weapons, users responded by making Claude the #1 app on the US App Store, overtaking ChatGPT for the first time.

March 1, 2026

ModelsGemini 3.1 Pro claims top-tier reasoning gains

Google has released Gemini 3.1 Pro, a new version of its Pro model line that it says upgrades the core reasoning capabilities behind recent Gemini 3 advances.

February 19, 2026