Karpathy says LLM memory features "trying too hard"

Written by Joseph Nordqvist / March 26, 2026 at 5:50 AM UTC


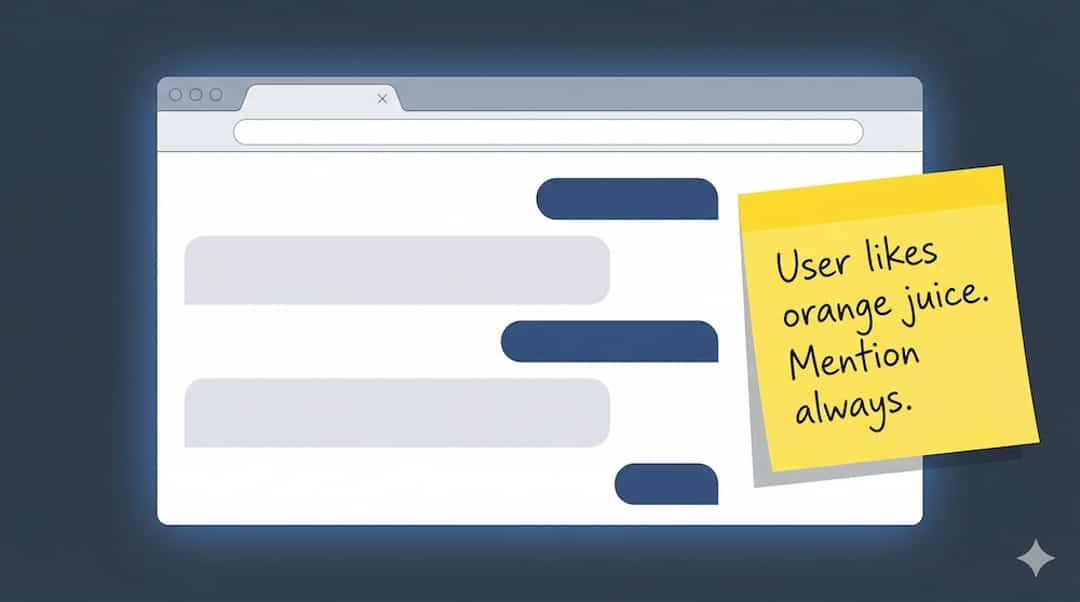

Every major AI chatbot now remembers things about you. OpenAI's ChatGPT, Anthropic's Claude and Google's Gemini all store details from past conversations and use them to personalize future responses. In a pair of posts on X on March 25, Andrej Karpathy noted that the memory feature has an issue: models can treat stale, one-off queries as deep ongoing interests.

"One common issue with personalization in all LLMs is how distracting memory seems to be for the models," Karpathy wrote. "A single question from 2 months ago about some topic can keep coming up as some kind of a deep interest of mine with undue mentions in perpetuity. Some kind of trying too hard."

The first post drew 15,000 likes and 1.5 million views within its first day.

Users recognize the pattern

The thread quickly filled with specific examples of the behavior Karpathy described. One user reported that a background in quantitative finance caused their chatbot to shoehorn financial concepts into unrelated conversations. "No you don't need to frame my kids education in terms of Sharpe ratios to help me understand it," they wrote.

Another said a vitamin deficiency they asked about a year ago continued to surface in responses, eventually prompting them to disable memory entirely.

A researcher noted that a single mention of an interpretability method they had worked on caused Claude to reference it in every subsequent project, regardless of relevance.

Several users described turning memory off as the most reliable workaround. "Every single offering sucks. It's better to start with a clean slate or use compartmentalized projects," one wrote.

The frustration spans languages and geographies. Replies in Japanese, Korean and Spanish echoed the same complaints, with one Japanese user noting that they had disabled memory across all AI products because the feature was more hindrance than help.

A training problem, not an implementation problem

In a follow-up post, Karpathy proposed an explanation rooted in how LLMs are trained rather than how any specific product implements memory. He noted that he cycles through all major LLMs and sees the same behavior across providers.

His hypothesis: during training, most information placed in the context window is genuinely relevant to the task at hand. This teaches models to treat everything in context as important. At inference time, when a memory system retrieves old conversation fragments via RAG (retrieval-augmented generation), the model applies that same learned bias, overfitting to whatever happens to appear, regardless of whether the user still cares about it.

This is consistent with an earlier observation Karpathy made about long context windows more broadly. In a February 2025 post about the "One Thread" approach to LLM conversations, he warned that too many tokens competing for attention can degrade performance by lowering the signal-to-noise ratio. The memory problem may be a specific case of a more general issue: models struggle to distinguish high-signal information from noise when given a large context.

The timing matters

Karpathy's posts arrive at a moment when AI companies are doubling down on personalization as a competitive moat. The business logic is clear: the more an AI assistant knows about you, the harder it is for you to switch to a rival.

But the feedback loop Karpathy describes suggests that more memory does not automatically mean better memory. Without mechanisms to weight recent interests over stale ones, or to distinguish between a one-off question and a genuine ongoing interest, memory features risk making models less useful rather than more.
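To make the idea of weighting recent, repeated interests over stale one-off questions concrete, here is a minimal Python sketch. It is an illustration only, not how any provider's memory system is known to work: the `Memory` record, the `memory_weight` and `select_memories` functions, the 30-day half-life and the 0.5 threshold are all assumptions chosen for the example.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Memory:
    """Hypothetical stored memory; not any vendor's actual schema."""
    topic: str
    mentions: int        # number of separate conversations touching the topic
    last_seen: datetime  # most recent time the user raised the topic


def memory_weight(memory: Memory, now: datetime, half_life_days: float = 30.0) -> float:
    """Score a memory by recency (exponential decay) times repetition (log of mentions)."""
    age_days = max((now - memory.last_seen).total_seconds() / 86400.0, 0.0)
    recency = 0.5 ** (age_days / half_life_days)  # halves every half_life_days
    repetition = math.log1p(memory.mentions)      # damped so counts do not dominate
    return recency * repetition


def select_memories(memories: list[Memory], now: datetime, threshold: float = 0.5) -> list[Memory]:
    """Keep only memories whose weight clears the (assumed) threshold, strongest first."""
    scored = [(memory_weight(m, now), m) for m in memories]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [m for weight, m in scored if weight >= threshold]


if __name__ == "__main__":
    now = datetime(2026, 3, 26)
    one_off = Memory("vitamin deficiency", mentions=1, last_seen=now - timedelta(days=60))
    ongoing = Memory("quantitative finance", mentions=12, last_seen=now - timedelta(days=2))
    for m in select_memories([one_off, ongoing], now):
        print(m.topic)
```

Under these assumed settings, a single question asked two months ago falls below the threshold and is never placed in the context window, while a topic raised a dozen times and as recently as two days ago is kept. A production system would likely need a relevance check against the current query as well; this sketch only addresses staleness and one-off mentions.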

Disclaimer

This article was written with the assistance of Claude by Anthropic and Gemini by Google, as part of AI News Home's commitment to transparency in AI-assisted journalism. All analysis, conclusions, and editorial decisions were made by human editors.

