Search is becoming a conversation, and Google just went first at scale

Written by Joseph Nordqvist/March 26, 2026 at 11:13 PM UTC

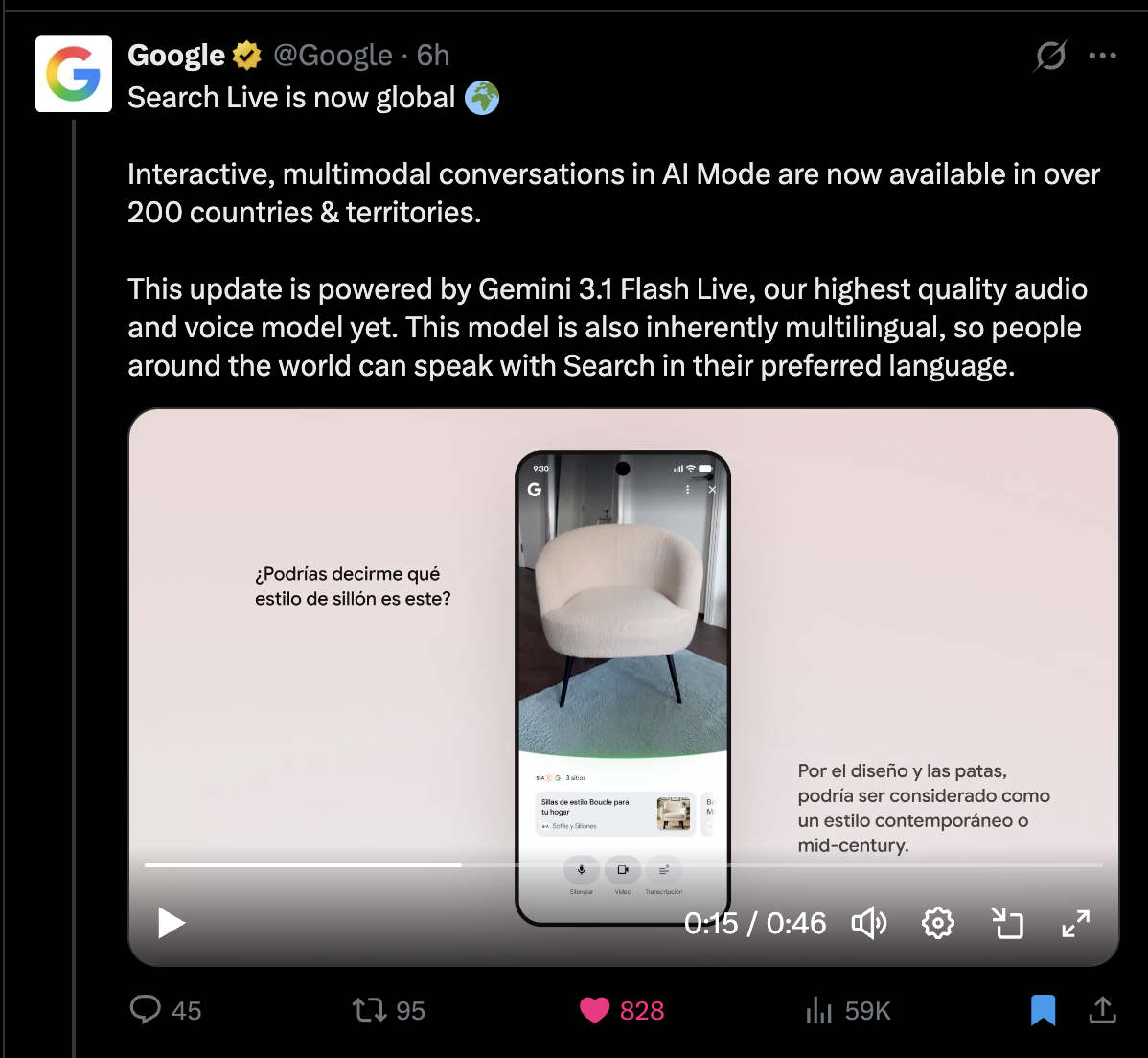

Google's expansion of Search Live to more than 200 countries this week is easy to frame as a product update. Its flagship AI voice model got an update and wider availability.

But zoom out and the move looks more significant: it is the first time any company has deployed conversational, multimodal search at truly global scale.

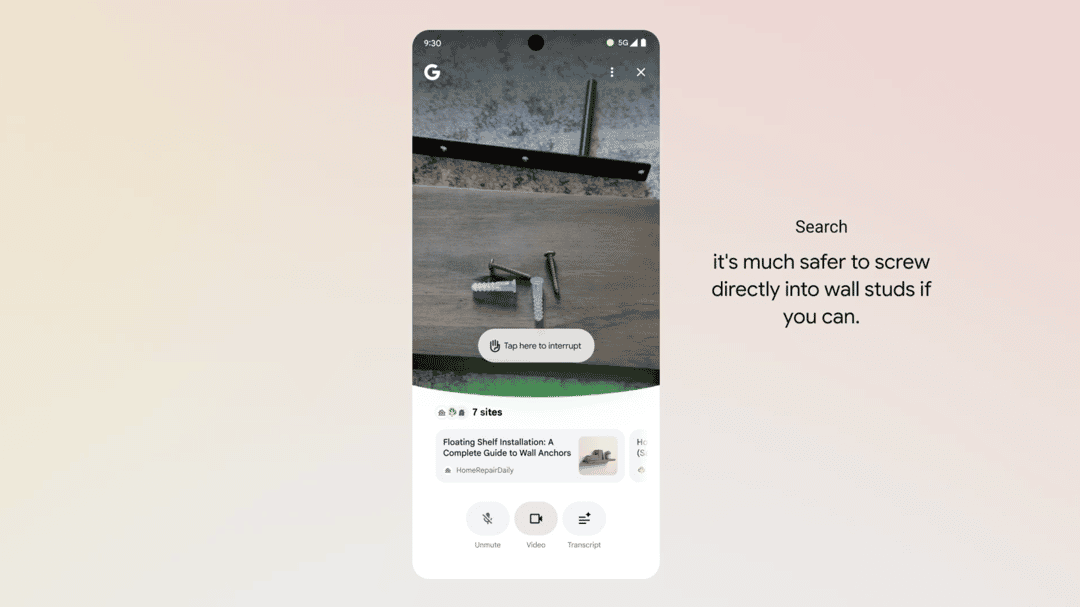

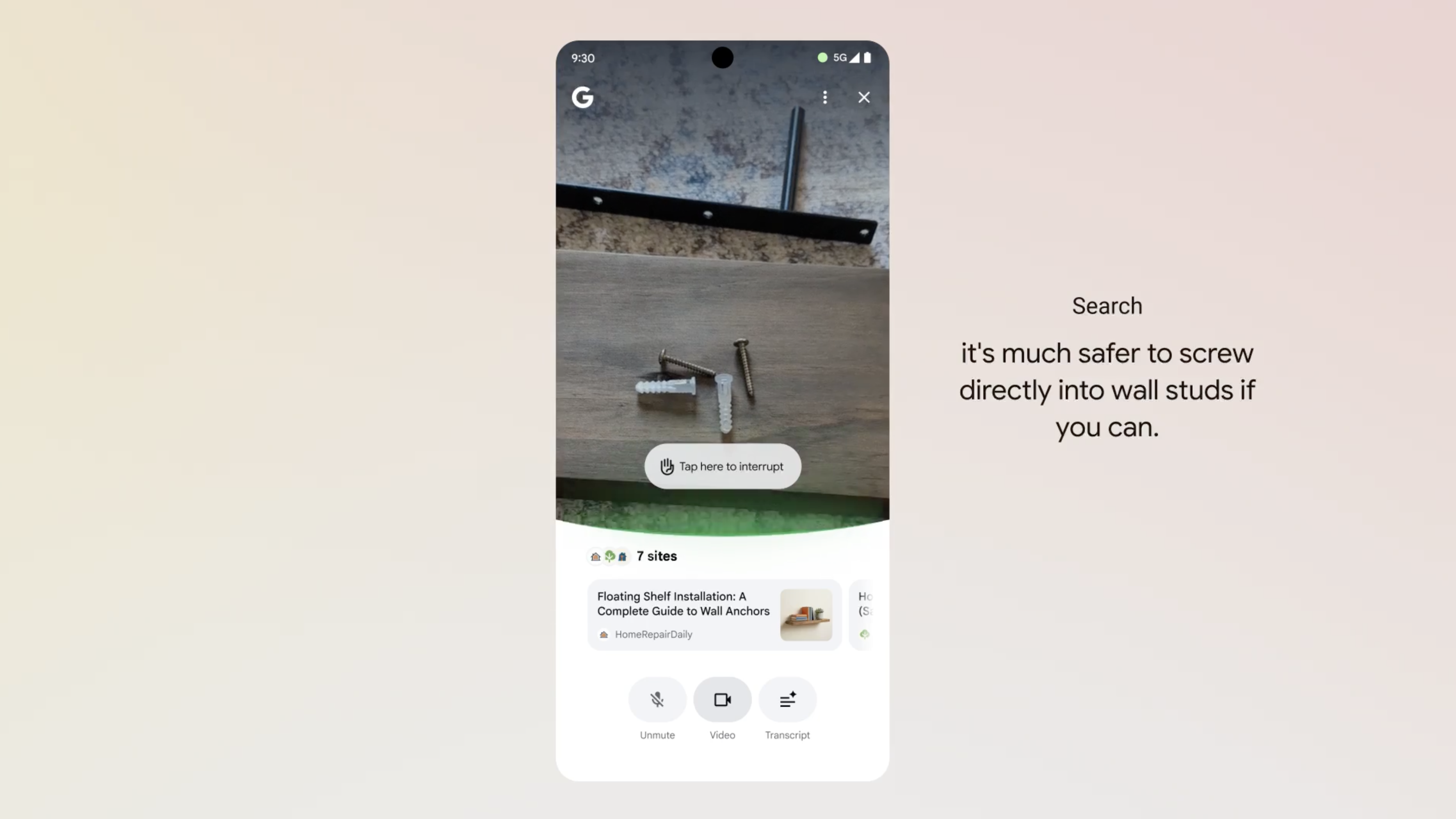

Search Live lets users point their phone camera at something, ask a question by voice, and have a back-and-forth dialogue with Google Search about what they are seeing.

It is powered by Gemini 3.1 Flash Live, Google's new real-time audio model, which supports over 90 languages. The feature was previously limited to a handful of markets. Now it is available everywhere Google's AI Mode operates.

This matters because it changes what "searching" looks like.

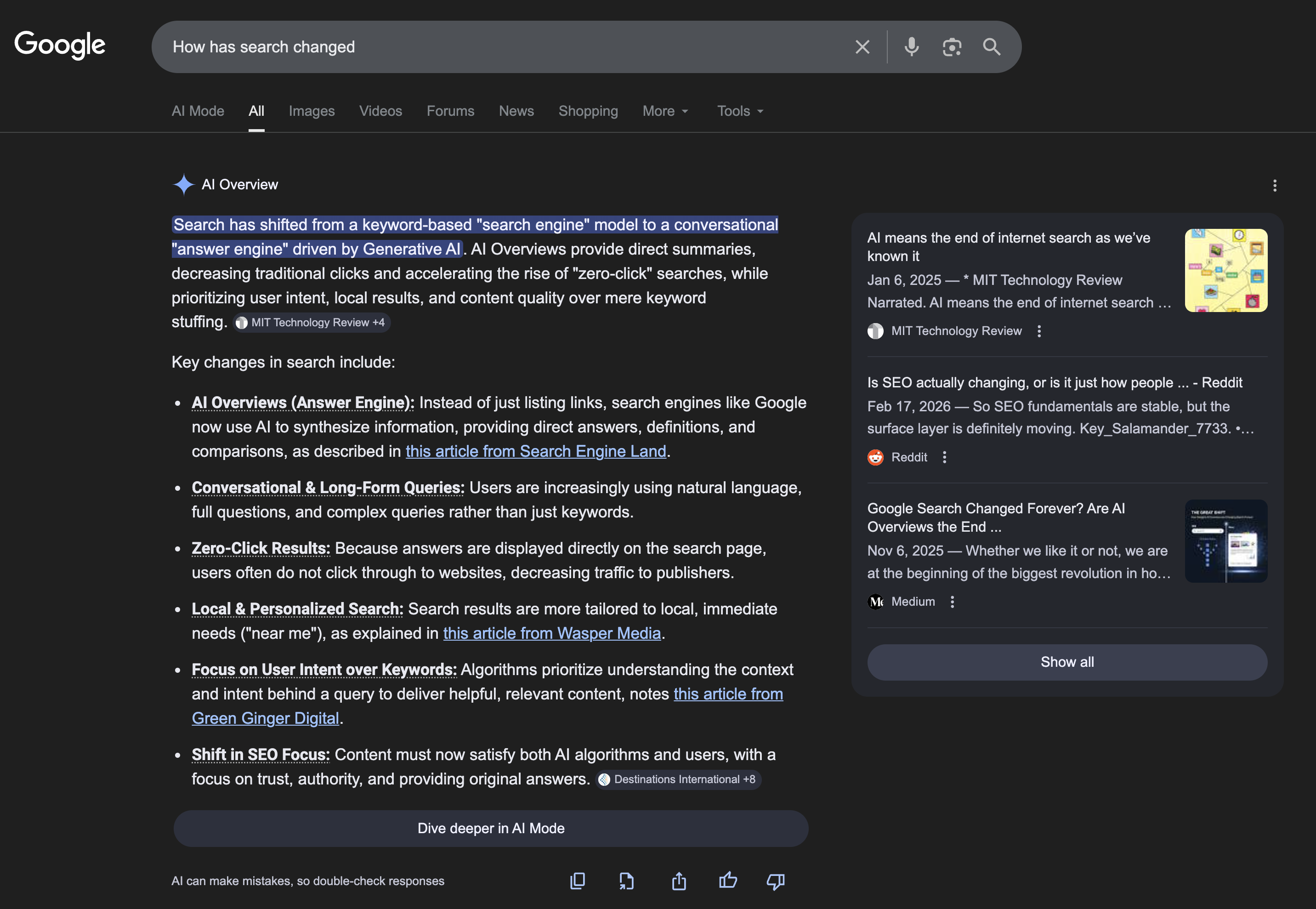

For two decades, search meant typing keywords into a box and scanning a list of blue links. AI Overviews (pictured below) began shifting that toward summarized answers, but the input was still text and the output was still a page.

Search Live replaces both: the input is your voice and your camera, and the output is a spoken conversation.

The search results page, the thing Google built its entire advertising business around, does not appear.

The competition is converging

Google is not alone in recognizing this shift, but it is the first to deploy it at this scale.

OpenAI improved ChatGPT's voice search capabilities in February 2026, citing a better ability to follow user instructions and to use tools like web search for better responses.

Perplexity also offers voice search in its main app and its Comet browser, with citations for the information it provides.

Both are meaningful products, but neither has Google's sheer distribution advantage. The fact that Search is already on every Android phone and most of the world's browsers gives it a head start that is hard to replicate with a standalone app.

Google's approach integrates voice, live camera input, and web search into a single interaction built around Google's search infrastructure. ChatGPT also supports live camera sharing during voice conversations and can invoke web search, but these are general-purpose assistant capabilities rather than fully fleshed-out features of a dedicated search product. In Search Live, pointing a camera at an object can surface shopping results, web links, and visual matches alongside the spoken response, tying the visual context directly to Google's search capabilities.

What changes when search becomes a dialogue

The shift from text queries to spoken conversation is more than just an interface change: it rewrites several assumptions that the search industry has operated on for years.

Query structure changes. When people type, they use keywords. When they speak, they use natural sentences, follow-up questions, and references to what they said 30 seconds ago. Search systems built for keyword matching are not optimized for this. Systems built for multi-turn dialogue are.

The "results page" disappears. In a voice conversation, there is one answer, spoken aloud, possibly followed by a clarifying question. While Search Live does attribute where it is getting the information from (as seen in the image below), it compresses the information funnel dramatically. For users, that is more convenient, as long as the information it provides is valuable.

For publishers and advertisers who depend on appearing in search results, it raises hard questions about visibility and attribution.

Context becomes continuous. In traditional search, each query is independent. In a voice conversation, the fifth question depends on the answer to the fourth. The model needs to maintain state across the entire dialogue. Google says 3.1 Flash Live can follow a conversation thread for twice as long as its predecessor, which suggests this is an area where they are actively investing.
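
To make the contrast concrete, here is a minimal Python sketch of the difference between independent queries and a stateful dialogue. Everything in it is hypothetical and for illustration only: the `DialogueSession` class, its `ask` method, and the `max_turns` window are assumptions, not Google's API and not a description of how Gemini 3.1 Flash Live tracks context. It only shows why a follow-up like "How tall does it get?" cannot be answered unless earlier turns travel with it.

```python
from dataclasses import dataclass, field


@dataclass
class DialogueSession:
    """Hypothetical session that carries earlier turns into each new query."""

    max_turns: int = 4  # assumed context window, counted in past turns
    history: list[str] = field(default_factory=list)

    def ask(self, utterance: str) -> str:
        """Return the text a search backend would see for this turn."""
        # Keep only the most recent turns; older ones fall out of the window.
        start = max(0, len(self.history) - self.max_turns)
        recent = self.history[start:]
        self.history.append(utterance)
        return " | ".join(recent + [utterance])


def stateless_query(utterance: str) -> str:
    """Traditional search: each query stands alone, with no prior context."""
    return utterance


session = DialogueSession(max_turns=2)
session.ask("What plant is this?")
session.ask("Is it safe for cats?")
contextual = session.ask("How tall does it get?")

print(stateless_query("How tall does it get?"))  # "it" has no referent
print(contextual)  # earlier turns supply the referent
```

In this toy model, a larger `max_turns` stands in for a system that can follow a conversation thread for longer; a real system would presumably manage context in a far more sophisticated way.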

The business model question

The most interesting tension in conversational search is economic. In 2024, Google generated about $265 billion in ad revenue, most of it from search ads that appear alongside query results. Wouldn’t a voice-first search interface that eliminates the results page also eliminate the primary surface for those ads?

Google has not said how it plans to monetize Search Live specifically. The company has been experimenting with ads in AI Overviews, but inserting advertising into a spoken conversation is a different and more delicate problem. Users are likely to have less tolerance for interruptions in a dialogue than they do for sponsored links on a page.

This is not a problem unique to Google. Any company building conversational search, whether OpenAI, Perplexity, or anyone else, faces the same question: if the answer is the product, where does the revenue come from?

What to watch

The real test is whether people actually use voice as their primary way to search, or whether it remains a secondary mode for specific situations like driving or cooking.

Google has the distribution to find out. And perhaps that’s what it is doing: testing traction. With Search Live now available globally, the company will have data within months on whether conversational search is something billions of people want or something that demos well but does not change daily behavior. That data will shape not just Google's product roadmap but the strategic assumptions of every company building in this space.

For now, the safest observation is that the interface of search is fragmenting. Text boxes, AI summaries, voice conversations, and camera-based queries are all becoming valid ways to find information. Google's decision to roll out Search Live to more markets is a bet that voice and vision will not just coexist with text search but eventually become the default for a significant share of queries.

This week's global launch is the beginning of that experiment.

Editorial Transparency

This article was produced with the assistance of AI tools as part of our editorial workflow. All analysis, conclusions, and editorial decisions were made by human editors.

References

1. Gemini 3.1 Flash Live: Making audio AI more natural and reliable. Valeria Wu, Yifan Ding, Google, March 26, 2026. (Primary)

2. Build real-time conversational agents with Gemini 3.1 Flash Live. Alisa Fortin, Thor Schaeff, Google, March 26, 2026. (Primary)

3. Alphabet Inc. Q4 2024 earnings (Form 8-K). Alphabet Inc. / SEC, February 4, 2025. Google advertising revenue summed across Q1-Q4 2024 SEC filings: approximately $264.6 billion. (Primary)